Issue #19: How to read ML papers

(Without a PhD in mathematics)

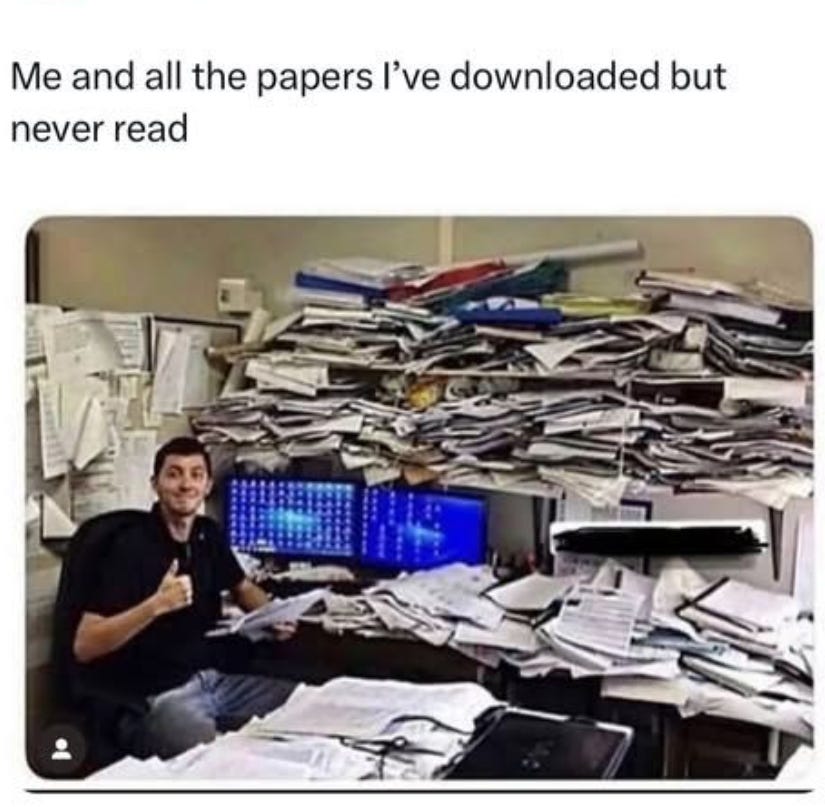

ML papers terrify you.

You open a PDF. You see a wall of Greek symbols, dense equations, and references to theorems you’ve never heard of.

You close it within 30 seconds and you tell yourself:

“I’m not ready for this. Maybe once I learn more math.”

That day never comes.

Here’s the truth: you don’t need a PhD to read ML papers.

Here is my 5-step process that takes you from “I can’t read this” to reading 2-3 papers per week.

Step 1: Stop reading top to bottom

This is the biggest mistake. Papers are not books and reading them linearly is the slowest way to understand them.

Instead, read in this order:

Title + abstract (30 seconds, decide if it’s worth your time)

Figures and tables (2 minutes, this is where the actual results are)

Last 2 paragraphs of the introduction (the contribution summary)

Method section (only the parts relevant to what you need)

Skip proofs entirely

80% of a paper’s value is in the abstract, figures, and method section. The rest is context for reviewers, not for you.

Step 2: Realize the math is the same every time

Here’s what most people don’t expect.

After 10-15 papers, the same operations appear over and over:

→ Matrix multiplication and transposition

→ Dot products and norms

→ Derivatives and gradients

→ Softmax and argmax

→ Summation and expectation

→ Argmin over a loss function

That’s it. That’s 80% of all the math you’ll encounter in ML papers.

You don’t need to know all of mathematics. You need to know these 10-15 operations deeply.
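
To make the list concrete, here is a minimal NumPy sketch that touches each operation once. The toy data, the least-squares loss, the step size, and every variable name are my own assumptions for illustration, not taken from any particular paper.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

# Toy data (illustrative only): 4 examples with 3 features each.
X = rng.normal(size=(4, 3))
w = np.array([0.5, -1.0, 2.0])
y = np.zeros(4)

# Matrix multiplication and transposition
gram = X.T @ X                  # (3, 4) @ (4, 3) -> (3, 3)

# Dot products and norms
scores = X @ w                  # one dot product per row of X
length = np.linalg.norm(w)      # Euclidean (L2) norm of w

# Softmax and argmax
def softmax(z):
    z = z - np.max(z)           # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

probs = softmax(scores)         # non-negative, sums to 1
best = int(np.argmax(probs))    # index of the largest probability

# Summation and expectation (the sample mean estimates an expectation)
total = scores.sum()
expected_score = scores.mean()

# Derivatives and gradients: loss(w) = ||Xw - y||^2 / n
def loss(w):
    r = X @ w - y
    return float(r @ r) / len(y)

def grad(w):
    # Analytic gradient of the loss above: 2 X^T (Xw - y) / n
    return 2.0 * X.T @ (X @ w - y) / len(y)

# Argmin over a loss function: approximate it with plain gradient descent
w_hat = w.copy()
for _ in range(200):
    w_hat -= 0.05 * grad(w_hat)  # step size 0.05 is an assumed, hand-picked value
```

Every line maps to one bullet above. If you can read this, you can read the matching equation.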

Step 3: Build a notation cheat sheet

Every time you see a symbol you don’t recognize, write it down.

Here’s what a typical cheat sheet looks like after ~50 papers:

→ θ = model parameters

→ L = loss function

→ ∇ = gradient

→ ∈ = “belongs to”

→ ∀ = “for all”

→ ||·|| = norm (length of a vector)

→ ~ = “sampled from”

→ E[·] = expected value

After about 20 papers, you rarely see new symbols. The notation becomes a language you can read fluently.
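
One way to make the cheat sheet stick is to write each symbol next to the code it turns into. Here is a small sketch of that idea. The toy loss L(θ; x) = (θ · x)² and the standard normal sampling are assumptions I picked for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)

# θ ∈ R^3 : "theta belongs to the set of 3-dimensional real vectors"
theta = np.array([1.0, -2.0, 0.5])

# x ~ N(0, I) : "x is sampled from a standard normal distribution"
xs = rng.normal(size=(1000, 3))

# L(θ; x) = (θ · x)^2 : a toy loss, chosen only for illustration
def L(theta, x):
    return (x @ theta) ** 2

# E[L(θ; x)] : expected value, estimated here by averaging over the samples
expected_loss = L(theta, xs).mean()

# ∇_θ L : gradient of the loss with respect to θ for a single sample x.
# For this toy loss it works out to 2 (θ · x) x.
def grad_L(theta, x):
    return 2.0 * (x @ theta) * x

# ||θ|| : norm (length) of the parameter vector
theta_norm = np.linalg.norm(theta)

# ∀ x : "for all x"; here, the toy loss is non-negative for every sample
all_nonneg = bool(np.all(L(theta, xs) >= 0))
```

For this toy setup the exact expectation is ||θ||², so `expected_loss` should land close to `theta_norm ** 2`. Checking an estimate against a value you can derive by hand is a good habit when you translate notation into code.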

Step 4: Implement the key equations

This is the step that separates people who read papers from people who understand them.

After reading, ask yourself: “What are the 3-5 equations that define this method?”

Then try implementing only those using just NumPy.
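
As an illustration, here is a sketch of one key equation from “Attention Is All You Need”: scaled dot-product attention, Attention(Q, K, V) = softmax(QKᵀ / √d_k) V. This is a simplified version under my own assumptions: a single head, no masking, no batching, no learned projections, and random toy inputs. The function and variable names are mine.

```python
import numpy as np

def softmax(z, axis=-1):
    # Subtract the row-wise max before exponentiating for numerical stability.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Compute softmax(Q K^T / sqrt(d_k)) V for 2-D arrays Q, K, V."""
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # (n_queries, n_keys) similarity scores
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights         # weighted average of the value rows

# Toy shapes, chosen only for illustration.
rng = np.random.default_rng(0)
Q = rng.normal(size=(2, 4))   # 2 queries, d_k = 4
K = rng.normal(size=(3, 4))   # 3 keys,    d_k = 4
V = rng.normal(size=(3, 5))   # 3 values,  d_v = 5

out, weights = scaled_dot_product_attention(Q, K, V)
print(out.shape, weights.shape)
```

That is the core of the method in about 15 lines. Everything else in the paper (multiple heads, masking, positional encodings) builds on this one equation.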

Step 5: Start with the right papers

Don’t start with a 40-page theory paper. Start with these:

“Attention Is All You Need”

“Efficient Estimation of Word Representations in Vector Space” (the word2vec paper)

“ImageNet Classification with Deep Convolutional Neural Networks”

1 paper per week. In 3 months, reading papers becomes routine.

The uncomfortable truth:

ML papers aren’t written to be easy to read. They’re written to pass peer review.

But the actual ideas inside them? Usually simple, elegant, and implementable in 20-50 lines of code.

It may have seemed that way, but the barrier was never your math background.

It was not having a system.

You don’t need a PhD to read ML papers.

You need a system: skip to the abstract and figures first, learn the same 10–15 math operations that repeat everywhere, build a notation cheat sheet, implement the key equations in NumPy, and start with accessible papers like “Attention Is All You Need.” 1 paper a week. In 3 months, it’s routine.

Thanks for reading this week’s issue.

Until next week’s issue, keep learning, keep building, and keep thinking like a mathematician.

-Terezija

P.S. If this issue helped you feel less intimidated by research papers, forward it to a friend who’s been avoiding them.

That’s how Math Mindset grows, 1 reader at a time.